Hi team,

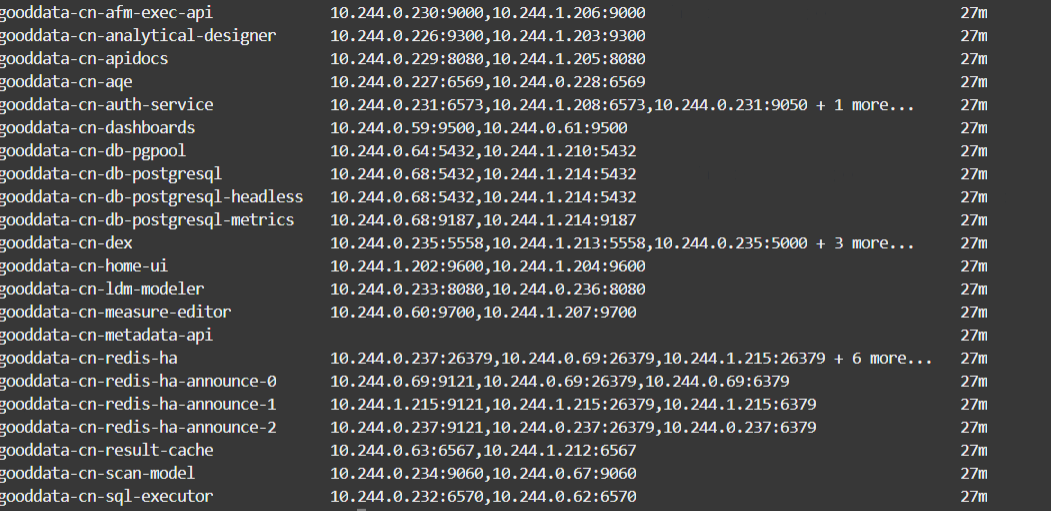

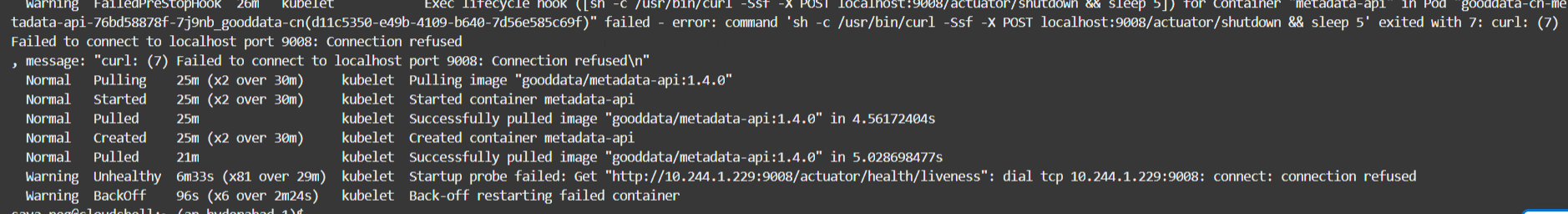

We installed gooddata-cn version 1.3.0 on our cloud provider, and all the pods come up and run properly. However, when we access the application or the organisation API, we get a 404 Not Found error.

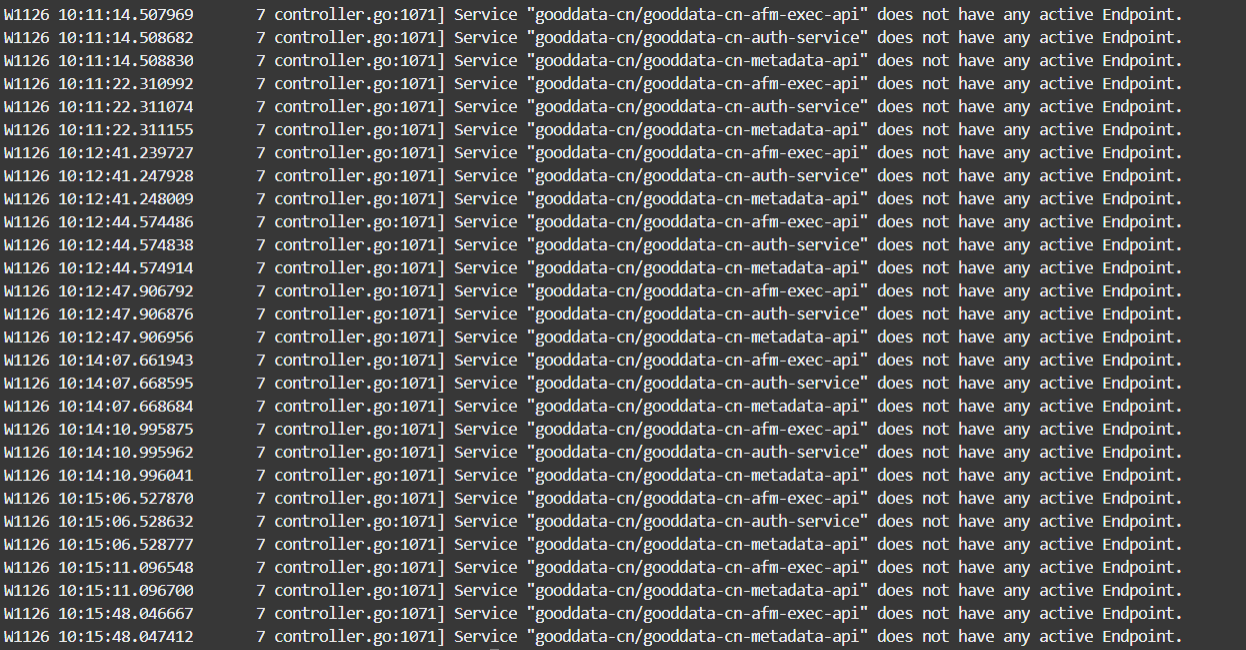

Checking the logs of the ingress-nginx pods, we see the following:

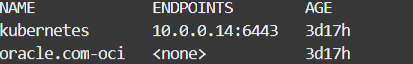

We also checked the endpoints using `kubectl get endpoints`.
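To check the `kubectl get endpoints` output for services that have no ready pods behind them, something like the sketch below could help. It assumes you saved the output of `kubectl get endpoints -A -o json` to a string. The helper name `endpoints_without_ready_addresses` and the sample namespace/service names are hypothetical, for illustration only.

```python
import json


def endpoints_without_ready_addresses(endpoints_json: str) -> list[str]:
    """Return "namespace/name" for every Endpoints object with no ready addresses.

    Assumes the input is the JSON from `kubectl get endpoints -A -o json`
    (an EndpointsList with an "items" array).
    """
    data = json.loads(endpoints_json)
    empty = []
    for item in data.get("items", []):
        meta = item.get("metadata", {})
        # "subsets" may be missing entirely when no pod matches the selector
        subsets = item.get("subsets") or []
        # Only "addresses" counts as ready; "notReadyAddresses" is ignored
        ready = sum(len(s.get("addresses") or []) for s in subsets)
        if ready == 0:
            empty.append(f"{meta.get('namespace', '')}/{meta.get('name', '')}")
    return empty


if __name__ == "__main__":
    # Hypothetical sample data, not taken from our cluster
    sample = json.dumps({"items": [
        {"metadata": {"namespace": "demo", "name": "svc-a"},
         "subsets": [{"addresses": [{"ip": "10.0.0.1"}]}]},
        {"metadata": {"namespace": "demo", "name": "svc-b"},
         "subsets": [{"notReadyAddresses": [{"ip": "10.0.0.2"}]}]},
        {"metadata": {"namespace": "demo", "name": "svc-c"}},
    ]})
    print(endpoints_without_ready_addresses(sample))
```

A service listed here has no ready backend, which is one possible reason an ingress could fail to route requests to it.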

We are using Oracle Cloud for the development environment, since initial testing will be done there. Please let me know whether we are missing any configuration.