Hi all, I am having trouble trying to set up my Gooddata.cn deployment on our GCP infrastructure. I successfully set up an instance (GD v2.0) in my other region a month ago. I am following the deployment guide but this time I am using GD.cn V2.1. I cannot figure out what went wrong.

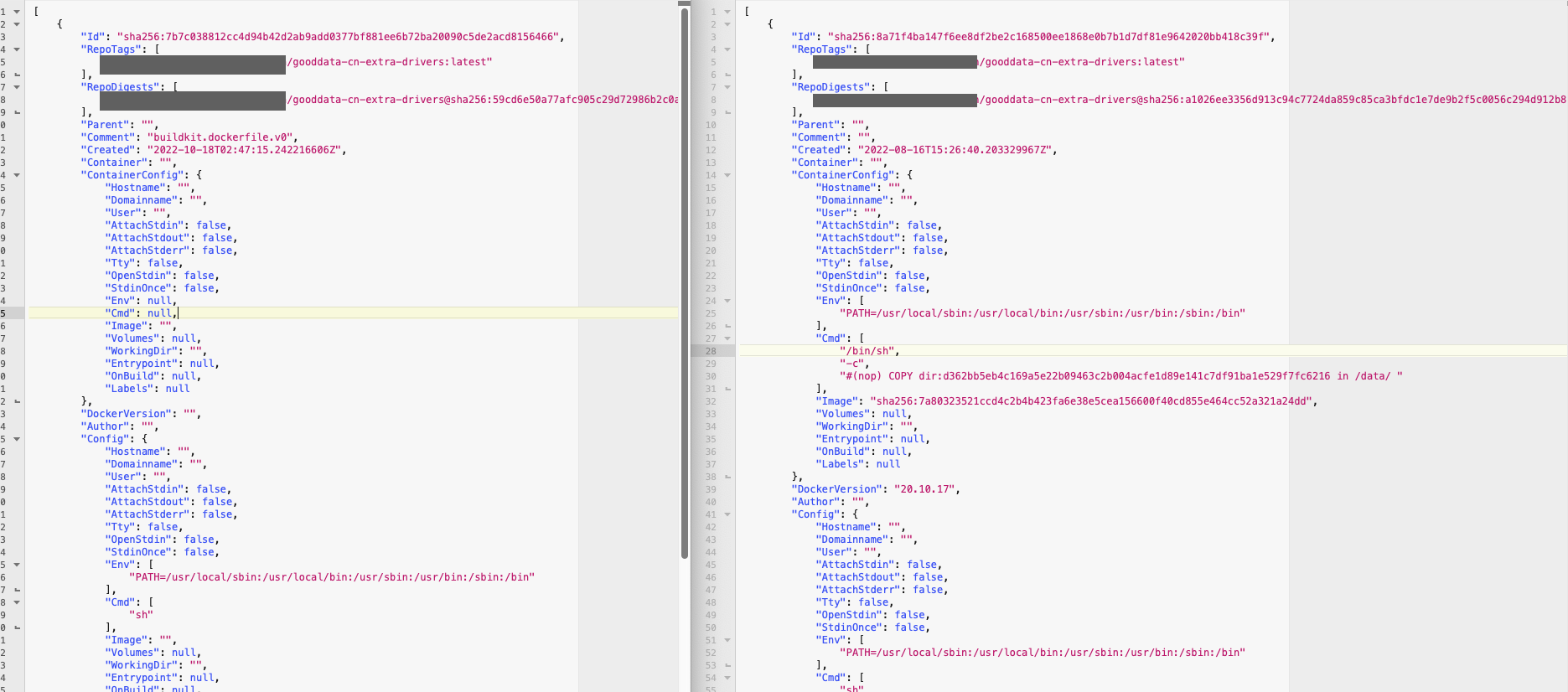

This is the point I got to. Deployed Pulsar, Nginx and GoodData.cn. My Pod for gooddata-cn-sql-executor is not running due to CrashLoopBackOff. And I can see the error happened when trying to run the copy-extra-drivers container. I am using BigQuery in this case. I built my image using busybox and copy drivers by following the exact same guide that worked for me last time. I used kubectl to describe the log for this container and there isn’t much lead for me. Here it is.

Name: gooddata-cn-sql-executor-5dffdbd85b-52vmt

Namespace: gooddata-cn

Priority: 0

Node: <MASKED>

Start Time: Mon, 17 Oct 2022 15:06:17 +0800

Labels: app.kubernetes.io/component=sqlExecutor

app.kubernetes.io/instance=gooddata-cn

app.kubernetes.io/name=gooddata-cn

pod-template-hash=5dffdbd85b

Annotations: prometheus.io/path: /actuator/prometheus

prometheus.io/port: 9101

prometheus.io/scrape: true

Status: Pending

IP: <MASKED>

IPs:

IP: <MASKED>

Controlled By: ReplicaSet/gooddata-cn-sql-executor-5dffdbd85b

Init Containers:

copy-extra-driver:

Container ID: containerd://fcb52b9bdf0170f233410430be82fa8680e28960a93b536b3541f232d7a52960

Image: <MASKED>/gooddata-cn-extra-drivers:latest

Image ID: <MASKED>/gooddata-cn-extra-drivers@sha256:ff8b4ef16e4242dda027f3c0c4cda937ab58a36bd10abd11498e7b9ca4473bfc

Port: <none>

Host Port: <none>

Command:

cp

-r

/data/.

/app/extra-drivers/

State: Waiting

Reason: CrashLoopBackOff

Last State: Terminated

Reason: Error

Exit Code: 1

Started: Mon, 17 Oct 2022 15:19:39 +0800

Finished: Mon, 17 Oct 2022 15:19:39 +0800

Ready: False

Restart Count: 7

Limits:

cpu: 1500m

ephemeral-storage: 300Mi

memory: 900Mi

Requests:

cpu: 150m

ephemeral-storage: 300Mi

memory: 600Mi

Environment: <none>

Mounts:

/app/extra-drivers from drivers (rw)

check-postgres-db:

Container ID:

Image: gooddata/tools:2.1.0

Image ID:

Port: <none>

Host Port: <none>

Command:

/bin/bash

-c

Args:

until pg_isready; do sleep 2; done; if [ "$(psql -Atq -c "select 1 from pg_database where datname = 'execution'")" != "1" ] ; then

createdb execution ;

fi ;

State: Waiting

Reason: PodInitializing

Ready: False

Restart Count: 0

Limits:

cpu: 1500m

ephemeral-storage: 300Mi

memory: 900Mi

Requests:

cpu: 150m

ephemeral-storage: 300Mi

memory: 600Mi

Environment:

PGHOST: <MASKED>

PGPORT: 5432

PGUSER: postgres

PGDATABASE: postgres

PGPASSWORD: <set to the key 'postgresql-password' in secret 'gooddata-cn-postgres-password'> Optional: false

SQLEXEC_PGPASSWORD: <set to the key 'postgresql-password' in secret 'gooddata-cn-postgres-password'> Optional: false

Mounts: <none>

Containers:

sql-executor:

Container ID:

Image: gooddata/sql-executor:2.1.0

Image ID:

Ports: 6570/TCP, 9101/TCP

Host Ports: 0/TCP, 0/TCP

State: Waiting

Reason: PodInitializing

Ready: False

Restart Count: 0

Limits:

cpu: 1500m

ephemeral-storage: 300Mi

memory: 900Mi

Requests:

cpu: 150m

ephemeral-storage: 300Mi

memory: 600Mi

Liveness: http-get http://:9101/actuator/health/liveness delay=30s timeout=5s period=10s #success=1 #failure=5

Readiness: http-get http://:9101/actuator/health/readiness delay=30s timeout=10s period=10s #success=1 #failure=5

Startup: http-get http://:9101/actuator/health/liveness delay=30s timeout=5s period=10s #success=1 #failure=12

Environment:

JDK_JAVA_OPTIONS: -XX:+ExitOnOutOfMemoryError

NODE_IP: (v1:status.hostIP)

POD_NAME: gooddata-cn-sql-executor-5dffdbd85b-52vmt (v1:metadata.name)

NAMESPACE: gooddata-cn (v1:metadata.namespace)

LOGGING_APPENDER: json

SPRING_MAIN_BANNER_MODE: off

SPRING_CONFIG_ADDITIONAL_LOCATION: classpath:git.properties

SPRING_ZIPKIN_ENABLED: false

ZIPKIN_HOST: jaeger-collector.monitoring

ZIPKIN_PORT: 9411

PULSAR_SERVICEURL: pulsar://pulsar-broker.pulsar:6650

PULSAR_ADMINURL: http://pulsar-broker.pulsar:8080

PULSAR_CONSUMERS_SELECT_TOPIC: gooddata-cn/gooddata-cn/sql.select

PULSAR_CONSUMERS_SELECT_DEAD_LETTER_TOPIC: gooddata-cn/gooddata-cn/sql.select.DLQ

PULSAR_CONSUMERS_DATA_SOURCE_CHANGE_TOPIC: gooddata-cn/gooddata-cn/data-source.change

PULSAR_CONSUMERS_CACHES_GARBAGE_COLLECT_TOPIC: gooddata-cn/gooddata-cn/caches.garbage-collect

GRPC_RAWCACHE_HOST: gooddata-cn-result-cache-headless

GRPC_RAWCACHE_PORT: 6567

GRPC_LICENSE_HOST: gooddata-cn-auth-service-headless

GRPC_LICENSE_PORT: 6573

GRPC_DATASOURCE_HOST: gooddata-cn-metadata-api-headless

GRPC_DATASOURCE_PORT: 6572

SPRING_DATASOURCE_URL: jdbc:postgresql://<MASKED>:5432/execution

BANNED_JDBC_URLS: jdbc:postgresql://<MASKED>:5432/dex

jdbc:postgresql://<MASKED>:5432/md?reWriteBatchedInserts=true

SPRING_DATASOURCE_USERNAME: postgres

SPRING_DATASOURCE_PASSWORD: <set to the key 'postgresql-password' in secret 'gooddata-cn-postgres-password'> Optional: false

LOG4J_ASYNC_LOGGER_RING_BUFFER_SIZE: 262144

GDC_TELEMETRY_ENABLED: true

GDC_TELEMETRY_SITE_ID: 2

LIMIT_MAX_RESULT_RAW_BYTES: 100000000

GRPC_SERVER_MAX_CONNECTION_AGE: 300

GRPC_SERVER_PERMIT_KEEP_ALIVE_TIME: 25

GRPC_SERVER_PERMIT_KEEP_ALIVE_WITHOUT_CALLS: true

Mounts:

/app/extra-drivers from drivers (rw)

Conditions:

Type Status

Initialized False

Ready False

ContainersReady False

PodScheduled True

Volumes:

drivers:

Type: EmptyDir (a temporary directory that shares a pod's lifetime)

Medium:

SizeLimit: <unset>

QoS Class: Burstable

Node-Selectors: <none>

Tolerations: node.kubernetes.io/not-ready:NoExecute op=Exists for 300s

node.kubernetes.io/unreachable:NoExecute op=Exists for 300s

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Scheduled 14m default-scheduler Successfully assigned gooddata-cn/gooddata-cn-sql-executor-5dffdbd85b-52vmt to gke-<MASKED>-default-pool-dc2c3081-6dn5

Normal Pulled 13m kubelet Successfully pulled image "<MASKED>/gd-cn/gooddata-cn-extra-drivers:latest" in 42.46204614s

Normal Pulled 12m kubelet Successfully pulled image "<MASKED>/gd-cn/gooddata-cn-extra-drivers:latest" in 1m13.368103313s

Normal Pulled 12m kubelet Successfully pulled image "<MASKED>/gd-cn/gooddata-cn-extra-drivers:latest" in 1.740437329s

Normal Created 11m (x4 over 13m) kubelet Created container copy-extra-driver

Normal Pulled 11m kubelet Successfully pulled image "<MASKED>/gd-cn/gooddata-cn-extra-drivers:latest" in 1.619845639s

Normal Started 11m (x4 over 13m) kubelet Started container copy-extra-driver

Normal Pulled 10m kubelet Successfully pulled image "<MASKED>/gd-cn/gooddata-cn-extra-drivers:latest" in 1.856558262s

Normal Pulling 9m6s (x6 over 14m) kubelet Pulling image "<MASKED>/gd-cn/gooddata-cn-extra-drivers:latest"

Warning BackOff 4m7s (x38 over 12m) kubelet Back-off restarting failed container

Best answer by Robert Moucha

View original