Hi there,

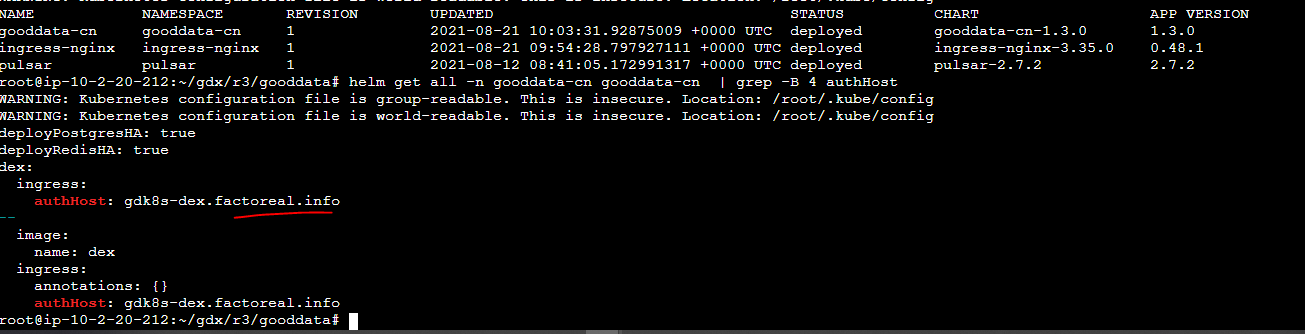

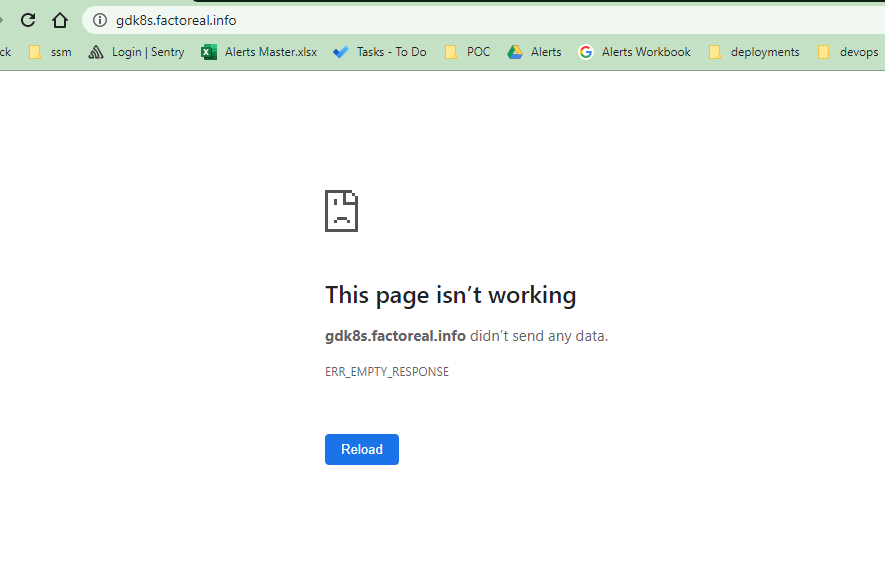

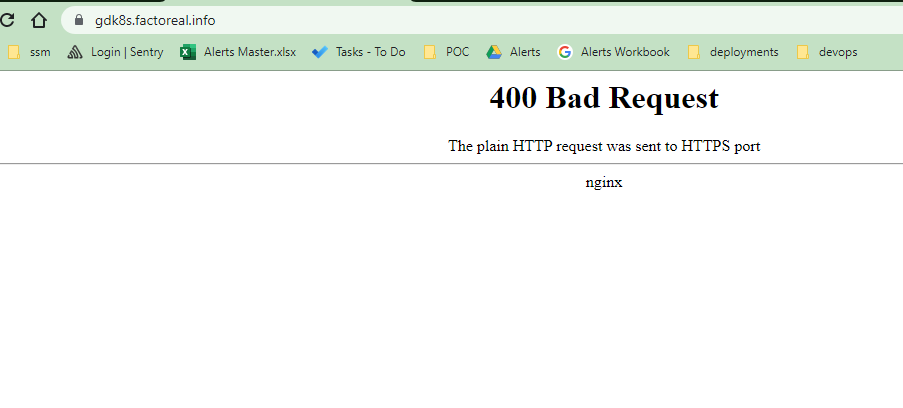

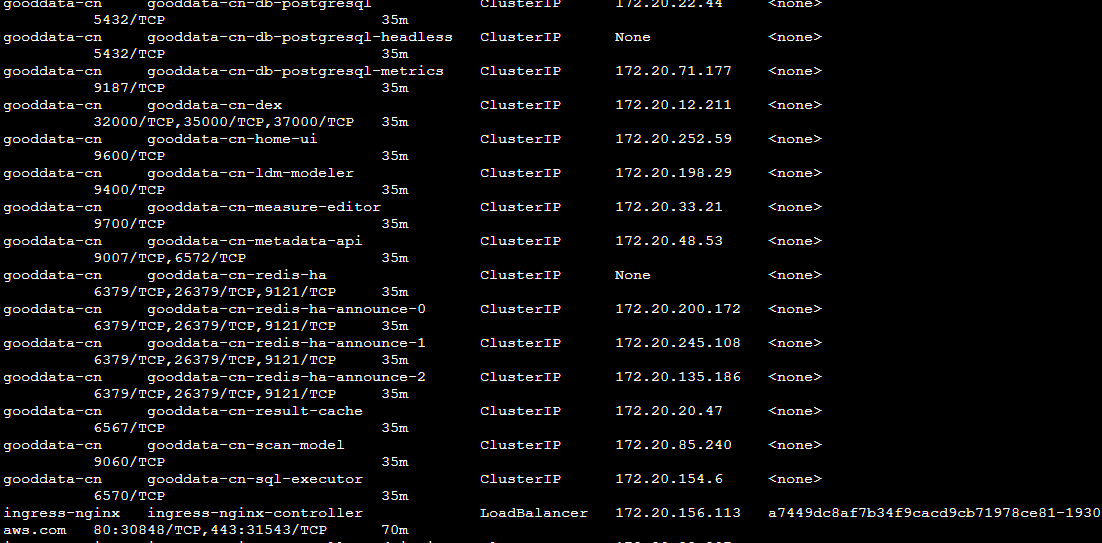

We are facing a Dex redirection to localhost when we visit the home URL (/) after a fresh standard installation on AWS K8s.

Please find attached the deployment YAML files used for the deployment below.

URL: http://gd-k8s.factoreal.info

Note: The attached file does not contain a valid license.

Thanks

Ashok

@0fffh

Best answer by Milan Sladky
